How We Kept Path to Hope Online for 14 Days Straight

2026-04-29

A (mostly) non-technical story about servers, storms, and the kindness of the streaming community.

Musical accompaniment: Tickle Foot Servers, The Musical

The Road Ahead

Twitch streamers Nordic Noob and Roamin Nomad, with the support of the Risk: Global Domination Twitch community, are raising awareness of Morquio Syndrome and fundraising for Fighting for Freya. They've always had big ideas to fight this rare genetic disease.

The cross-country Path to Hope road trip was already on the radar when they decided to test the waters. In the fall of 2025, they took a three-day trip to North Carolina and streamed the drive. The plan was exactly what you would expect: point a camera at the windshield, hit "Go Live," and let the miles roll by. For a short trip, it worked well enough. But anyone who has ever tried to keep a stable internet connection in a moving vehicle knows that "simple" and "reality" are rarely on speaking terms.

Despite the technical challenges, the drops, the glitches, and the buffering, the North Carolina trip proved something important: there was genuine community interest in this content. People wanted to ride along. It also gave us a clear list of technical problems to address before the big trip. By the time planning began for the April 2026 cross-country journey, we knew the community deserved better than a pixelated slideshow. A small crew of us volunteered to build the infrastructure that would carry the stream from coast to coast. We had no idea our best planning would lead to two weeks of improvisation, stubbornness, and more than a little luck.

Preparation: Dreams and Diagrams

Choosing the Architecture

The fall 2025 trip had made one thing painfully clear: when the truck hit a poor signal area, the Twitch stream would drop. The video would glitch, buffer, or vanish entirely until the connection recovered. It was frustrating for viewers and stressful for the streamers.

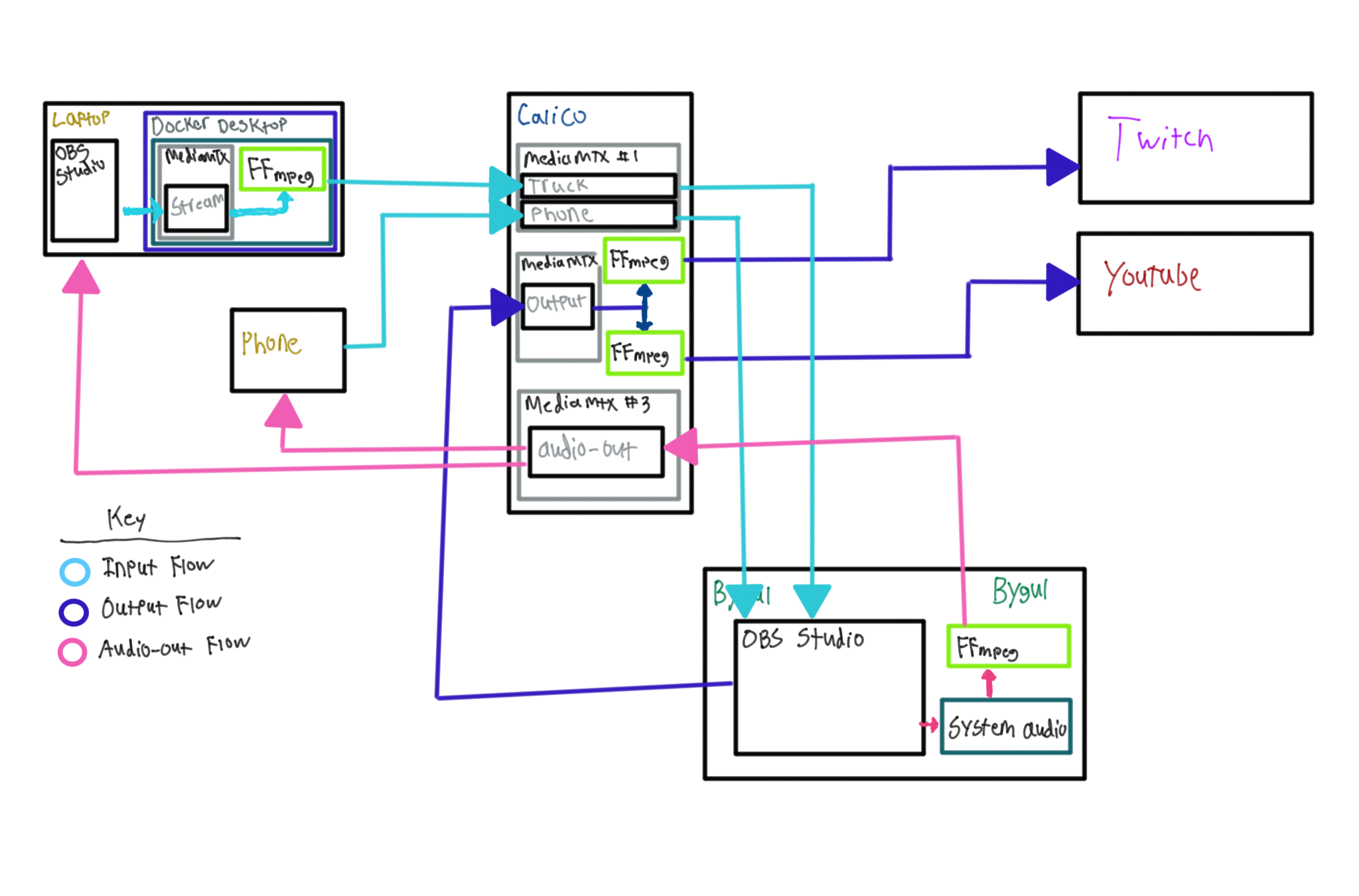

That is when The Statics (The Static Mage and The Static Brock) proposed a different approach. Instead of the truck streaming directly to Twitch, the truck would stream to a server of our own. The server would mix in the music and overlays and handle the connection to the platforms, so even if the truck dropped out for a minute or two, the stream itself would stay live. Viewers would see a brief hiccup, not a full disconnect.

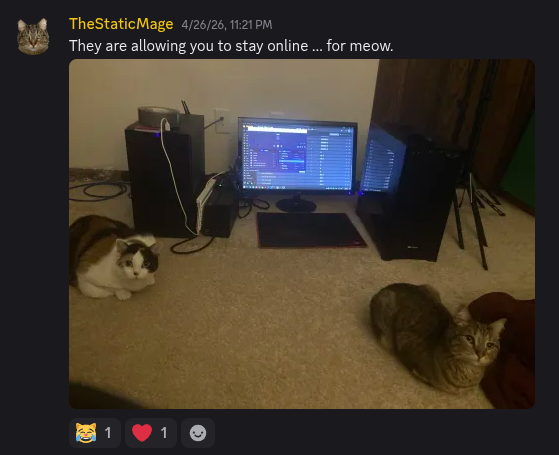

We started the way most technical projects start: with a Discord channel and the belief that we could plan our way out of every problem. The first idea was to use a spare PC sitting in Mage's house. It was sufficiently powerful, it was free, and it was already there. But we had concerns. Residential power flickers. Residential internet goes down. Cats sometimes step on the power button of PCs. We quickly determined that if we were going to support a multi-week journey, we needed something more reliable than a machine on the floor of the office.

In the end, we preferred the idea of a server in a proper data center, a facility with redundant power, commercial bandwidth, and none of the vulnerabilities of a home setup. It was the safer bet. Or so we thought.

Experiments in the Cloud

Our first instinct was to reach for the biggest name in cloud computing. We spun up an instance on AWS, did some bandwidth calculations, and ran a few synthetic tests. The performance was acceptable, but the projected cost for a multi-week, always-on stream with significant outbound bandwidth made us wince: $750. We were a group of friends doing this out of pocket and out of love. We needed something that would not require a second mortgage.

(For technical details: see Our Initial AWS Experiments)

Purpose-Built Services

We also looked at services built specifically for IRL streamers. The idea was appealing: a company that understood the exact problem we were trying to solve. No need to cobble together our own server, no need to worry about bandwidth negotiations, just pay a monthly fee and stream through their managed platform.

The catch was that these platforms are mostly designed for a very specific kind of IRL streamer: someone walking around with a single camera and a backpack full of cellular modems. Our setup was different. We needed at least two simultaneous ingests (one from the truck and one from a phone) but most of these services only supported a single stream. They also provided servers that run on Windows, which became an immediate non-starter since all of our custom tooling, automation, and server scripts were written for Linux. Rebuilding the entire software stack for Windows was not on anyone's wish list.

Then there was the feature gap. These services offered basic overlays and simple chat bots, which was fine for a standard walking-around stream. But we were doing genuinely complex things with Firebot: custom redeems, camera switching, dynamic overlays driven by live data, music playing, AFK slideshows, and integrations that these platforms do not readily support. We would have been paying to paint ourselves into a corner.

Finally, the cost. The cheapest option we found that might have worked was $269 per month. We needed at least a month to set up and test, and some providers had minimum commitments or installation fees on top of the base rate. We were staring down several hundred dollars in sunk costs before we even knew if the service could handle what we needed. In the end, the combination of limited ingests, Windows-only infrastructure, shallow feature support, and steep pricing made the purpose-built route feel like an expensive compromise rather than a real solution.

The Budget Host

After some searching, we landed on a less expensive hosting provider that advertised the specs we needed at a price that did not make our wallets cry. It would run about $100 for the two-week trip, plus about $50 during the lead-up period to build and test everything out. We will not name them, partly because we do not want to give them the attention, and partly because what happened later speaks for itself. At the time, though, they looked like the perfect compromise: dedicated resources (or so they claimed), good reviews, a friendly and prompt support chat, and enough bandwidth to handle a modest audience.

This server was named "Valhalla" in keeping with the theme of the Nordic Noob stream.

Assembling OBS Like a Puzzle

While the server discussions were happening, Nordic Noob was back at the truck, building out the OBS scenes and wiring up multiple cameras. It was less like configuring software and more like assembling a puzzle where half the pieces were from different boxes. Roamin Nomad's wife designed graphics that met specific pixel dimensions supplied by The Statics, and Nordic Noob positioned the cabin camera overlay at the exact coordinates where it would blend naturally with a bottom bar from the server conveying location, distance, and fundraising statistics. Viewers would not even notice that the scene was generated from two different OBS instances thousands of miles apart and involved at least five different data streams somewhere between the truck and their browser.

The scenes evolved daily as new ideas surfaced and old ones were abandoned. By the time we were "done," the OBS profile had dozens of sources, enough to make any person dizzy, along with over 284,000 lines of custom code and automated server provisioning supplied by Brock, for which school credit was given!

In the meantime, Nordic Noob uploaded over 1,200 songs from his licensed music service (including a number of Christmas songs, of course!) and Roamin Nomad supplied photos of Freya and her cats for the overnight slideshow.

Pre-Stream Testing: The Dress Rehearsals

Test Stream Zero: Benchmarking

About a month before the planned launch, we ran our first private test stream. The goal was purely technical: could the server handle the bitrate? Would the truck's connection hold up? Nordic Noob headed for his local Waffle House, pointed the camera at the parking lot, and started the stream while we watched the graphs. The server ingested the feed without complaint and the audio was in sync. He headed downtown and, for about 15 minutes, narrated "red light" and "green light" as traffic signals changed to confirm that the audio stayed in sync. Everything seemed quite stable. We patted ourselves on the back and called it a night.

Test Stream One: The First Live Attempt

The first test stream was broadcast live on Nordic Noob's Twitch channel and also sent to a private YouTube feed. The usual community members showed up to give feedback. Was the audio balanced? Did the overlays block anything important? Did the TTS anti-troll filter work well, or perhaps too well? How did it feel to watch a stream where "content" was mostly driving around town, including some places where we knew the internet connectivity would be rough? Did the map widgets we worked so hard on actually add value? We identified several areas of improvement, but the feedback was overwhelmingly positive. People loved the vibe. The sense of shared adventure was palpable.

Test Stream Two: The Viewer Experience

The second test stream was more about the human side. This time we enabled a number of our redeems, including the ability to trigger a Christmas song, switch the camera view, and make Nordic Noob order food from a drive-thru with an unrecognizable yet hilarious Australian-British accent. Once again, things worked well, and OBS had at least 33% more headroom before viewers would ever experience lag. Anticipation was building for the main event.

We went to bed feeling confident. That feeling would not last.

Pre-Stream: The Cracks Begin to Show

Network Drop-Outs

In the days leading up to the trip, we started noticing intermittent network drop-outs from the Valhalla server in the data center. A minute here, thirty seconds there. This should never happen with a professional hosting setup, but we had metrics to prove the slowness we felt. We reached out to our budget host with questions and data, and got a response that they would "ask" and tell us when they heard something. Huh? Ask who? When would we hear back? This was highly concerning.

Three days later, we got another message from them, asking whether we were still noticing the drop-outs. No explanation, no investigation, no details. It was unclear what, if anything, they had been doing during those three days to look into the issue. The response we got was vague, dismissive, and dripping with the kind of non-answer that makes your stomach sink.

We listened to that feeling and decided then and there that we needed a backup plan. On Sunday, Mage reinstalled an operating system on his 2018-era PC and Brock applied his automation to set up the operating system and software. Data was synced from the cloud server to the one in the office. Keeping with the Norse theme, we named this server "Bygul" after one of the cats that pulled Freya's chariot. It would be nice to have as a backup plan, but we wouldn't need it... right?

In Norse mythology, the two cats that pulled Freya's chariot are Trjegul and Bygul. Learn more.

The Morning-Of Failure

On the morning that the stream was set to go live, we clicked the button to scale up the Valhalla server from 4 vCPU to 32 vCPU. (We scaled the server down to 4 vCPU whenever it wasn't in use because that cost $0.03 per hour, versus $0.29 per hour at 32 vCPU. This is how the cloud works.) We had done this many times before, for each private and public test stream and multiple other times for testing.
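For the curious, the arithmetic behind that habit can be sketched in a few lines of Python. This is only an illustration using the two hourly rates quoted above; the function name and the assumption that the server is billed around the clock for the full trip are ours, not anything from the host's billing documentation.

```python
# Hourly prices quoted in this post: vCPU count -> USD per hour.
HOURLY_RATE_USD = {4: 0.03, 32: 0.29}


def cost_usd(vcpus: int, hours: float) -> float:
    """Cost of running the instance at a given size for a number of hours."""
    return round(HOURLY_RATE_USD[vcpus] * hours, 2)


# Assumption: the server stays at one size for the entire two-week trip.
TRIP_HOURS = 14 * 24

print("32 vCPU for the trip:", cost_usd(32, TRIP_HOURS))
print(" 4 vCPU for the trip:", cost_usd(4, TRIP_HOURS))
```

Fourteen days at the 32 vCPU rate comes to roughly $97, which lines up with the "about $100" estimate for the trip. The same period at the 4 vCPU rate is roughly $10, which is why we parked the server at the small size between tests.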

But this morning was different. The console presented a vague error and the server would not scale. We put in yet another support ticket and the host promised to look at it. For the next tense six hours, we could see entries in the log showing various administrators trying to scale the server. All attempts were unsuccessful.

Eventually, days later, they admitted the truth: they did not have the capacity in that data center to scale us up. They had sold us a promise they could not keep, and they had the audacity to only tell us after we had already missed our launch window. We don't know if this was intentional, or if it actually took them that long to figure it out.

The Storm Shelter Stream

With the cloud host dead in the water and the stream ready to go live, we pivoted. Bygul was quietly purring in the office, ready to pull the chariot for Path to Hope after a rich past life of streaming, video encoding, and hosting the occasional over-engineered side project. We decided, with no small amount of desperation, that this machine would become the new streaming backbone.

We spent the afternoon configuring an ingest relay in a more reliable cloud (DigitalOcean) and praying that the predicted severe storms in the Midwest later that day would not knock out the power or internet at home. It was rough, improvised, and held together with hope. But it was something.

On the night of Tuesday, April 14, as we prepared to start the stream, severe weather rolled through our area. Tornado warnings blared. We gathered the cats and retreated to the storm shelter in the basement. And from that shelter, huddled with laptops and phones, we pressed "Go live."

Day One: The Real Launch

Up at 3:00 AM

We woke up before dawn on the morning of Wednesday, April 15. We were both up at 3:00 AM to make sure the server had survived the night, that the connections were stable, and that Nordic Noob and Roamin Nomad would not be greeted with errors when they started the truck engine. The pre-dawn hours were quiet, methodical, and tense. Every log line was scrutinized. Every fluctuation was noted. When the truck finally started rolling and the stream came online, it felt like the first real breath we had taken in twenty-four hours.

Building a New Home: Trjegul Rises

We knew we could not rely on a residential connection for the entire trip. The storms that had sent the family to the shelter were not a one-off. Friday was forecast to bring more severe weather near the Mage household. If the power or internet went down, the stream would die.

While we were not particularly pleased with our current host, we did have a verified account with them and they had spare capacity in their Dallas data center. With server automation in hand, we built a new server, codenamed "Trjegul" after the other cat that pulled Freya's chariot. We hoped that the redundant power, commercial bandwidth, and (with luck) fewer tornadoes would be a blessing for the stream. On Thursday night, while the voyagers camped in Arizona, we performed a cutover: we stopped streaming from Bygul and started streaming from Trjegul. Viewers barely noticed. The stream hiccupped for a moment, then continued on its new path. We allowed ourselves to believe the crisis was over.

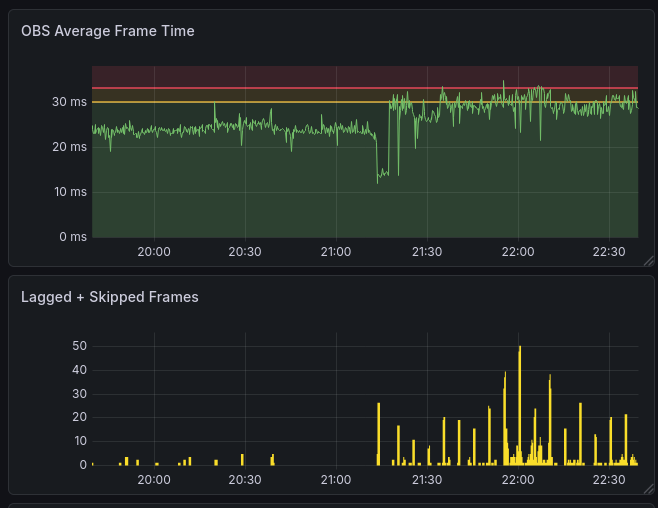

Trjegul was built on the largest instance that this host offered in Dallas, but it had 24 vCPUs, fewer than Valhalla's 32. Friday and Saturday, we nervously watched the dashboards. Rendering time hovered around 25-30 ms per frame, leaving only about 10% headroom to the 33.3 ms threshold where lag would be visible to viewers. It stayed within tolerable ranges as the voyagers headed to California, walked around Los Angeles, and made their way up the coast.
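The headroom figure is simple arithmetic, sketched below in Python. The 33.3 ms threshold corresponds to one frame at 30 frames per second (1000 / 30); the 30 fps output rate is our inference from that threshold, and the function names are made up for this illustration.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame at the given frame rate."""
    return 1000.0 / fps


def headroom_pct(render_ms: float, fps: float = 30.0) -> float:
    """Share of the per-frame budget still unused, as a percentage."""
    budget = frame_budget_ms(fps)
    return round(100.0 * (budget - render_ms) / budget, 1)


# The range we saw on Trjegul: 25 to 30 ms per frame.
for render_ms in (25.0, 30.0):
    print(f"{render_ms} ms per frame leaves {headroom_pct(render_ms)}% headroom")
```

At 25 ms a quarter of the budget is still free; at 30 ms only a tenth is. Any spike larger than that remaining slice means a late frame.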

Saturday: History Repeats

The Noisy Neighbor

The data center server performed admirably through Friday and into Saturday. But as Nordic Noob and Roamin Nomad closed in on their campground just south of San Francisco, the stream quality began to degrade. We could see and hear buffering live on the Twitch stream. Our metrics showed the culprit: the frame generation time was now averaging above 30 ms and spiking above 33. Other metrics showed why: the "steal" percentage of CPU utilization had spiked. This indicated that a "noisy neighbor" had moved in on the same physical host, consuming resources in a way that impacted our instance. This is not supposed to happen with the advertised "dedicated" CPU resources, but the trust we had in the hosting company had long since evaporated. It's the kind of problem that is maddening because it is based on misrepresentation and completely outside our control.
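For readers who want to check their own virtual machine, here is a minimal Python sketch of how a steal percentage can be derived on Linux. It is not the monitoring stack we actually ran. It assumes the standard layout of the aggregate `cpu` line in `/proc/stat` (user, nice, system, idle, iowait, irq, softirq, steal, then guest fields), and the two snapshots below are invented numbers for illustration, not our real metrics.

```python
from typing import List


def parse_cpu_line(stat_text: str) -> List[int]:
    """Return the counters from the aggregate 'cpu' line of /proc/stat text."""
    for line in stat_text.splitlines():
        if line.startswith("cpu "):
            return [int(value) for value in line.split()[1:]]
    raise ValueError("no aggregate cpu line found")


def steal_pct(before: str, after: str) -> float:
    """Percentage of CPU time stolen by the hypervisor between two snapshots."""
    first, second = parse_cpu_line(before), parse_cpu_line(after)
    deltas = [b - a for a, b in zip(first, second)]
    # Fields 0-7: user nice system idle iowait irq softirq steal.
    # Guest time is already counted inside user/nice, so it is left out.
    total = sum(deltas[:8])
    if total == 0:
        return 0.0
    return round(100.0 * deltas[7] / total, 1)


# Invented sample snapshots; on a real Linux host, read /proc/stat twice
# a few seconds apart instead.
BEFORE = "cpu  1000 0 500 8000 100 0 50 50 0 0"
AFTER = "cpu  1600 0 700 8500 120 0 80 400 0 0"

print("steal %:", steal_pct(BEFORE, AFTER))
```

A steal value near zero is what you would expect from truly dedicated cores. A sustained value well above zero means the hypervisor is handing your CPU time to someone else.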

Compounding the issue, the network drop-outs had never fully gone away. Our logs showed two network drop-outs since we migrated to Trjegul. Both occurred overnight, so it's unlikely that anybody noticed, but our ever-vigilant monitoring caught them.

The noisy neighbor was the last straw, so we made a decision born of exhaustion and paranoia: we would switch the stream back to the Mage household server. The weather pattern had stabilized in the Midwest, and Bygul was ready to pull the chariot once again. The cutover was quick. The stream stabilized. Frame generation time dropped to less than 4 ms, thanks to Bygul's GPU.

Trjegul Goes AWOL

We woke up the next morning to the kind of notification that makes your blood run cold. Around 12:40 AM local time, the data center server (Trjegul) had gone completely offline. No warning. No email. No status page update. Just... gone.

Even though we did not plan to stream from Trjegul, we still wanted to keep it as a backup. Mage filed a support ticket immediately with the budget provider, complete with network diagnostics and traceroutes showing the server was unreachable from multiple vantage points. The response, when it finally came, was staggering: the server would be offline for 1-2 more hours because it was being moved from one data center to another.

Mage has been involved in multiple data center moves over his career. Some were highly formal affairs with professional movers, rigid checklists, and months of planning. Others were less formal, involving physically driving servers across town in the back of a car. But in every single case, there was extensive planning, customer communication, and scheduled maintenance windows. To move a production server without informing the customer beforehand far surpasses unprofessional -- it is unconscionable.

We would have been left furiously scrambling, but instead were relieved that we had switched back when we did. Trjegul finally came back onto the network about 18 hours after it had dropped off. Thanks to the diligent efforts of Bygul, nobody actually noticed the downtime.

Bygul: The Old PC That Would Not Quit

At the very beginning of our planning, we had thought of using exactly this PC in exactly this office for exactly this purpose, but we had ruled it out based on the promises of the cloud. As it turned out, this PC ran the stream for 12 out of 14 days. We continued to call it Bygul. Nordic Noob, with characteristic charm, took to calling it MageCloud on stream. Both names stuck, in their own way.

This line from Mage's song "Tickle Foot Servers, The Musical" nails it perfectly: "Better hold, no more reruns. We're out of Norse cat names to run!" (Wondering about "Tickle Foot"?)

"Tickle Foot" originates from the show New Girl, where it represented a playful joke or prank. During the North Carolina trip, while Nordic Noob was shopping in Wal-Mart, Mage and Brock coached Roamin Nomad through adding "Tickle Foot" to the end of every TTS message. This stuck and has now come to represent a running joke within our community.

Bygul was not in a data center. It did not have redundant power. We covered the power button so Mittens would not step on it and take down the stream (repeatedly reminding Nordic Noob that if he didn't keep his stream feline friendly, we might remove that safeguard). More importantly, the situation was now under our control. We could see the logs in real time. We could see it running without opening a ticket. And assuming that power and internet held, it would run like a champ.

Spokane: Camera Switch Redeems

Viewers had asked for the ability to redeem channel points to switch camera angles, a fun way to interact with the stream and break up the monotony of highway footage. This was working intermittently, hampered by an unreliable network cable and occasional interruptions between the computer and the ATEM switcher, which controlled which camera was live.

While Nordic Noob and Roamin Nomad were hanging out with Trendy, a friend and fellow streamer, we were remoted into the truck's systems to get the camera switch redeems working reliably again. Mage created a new middleware between our stream bot and the ATEM and tested it remotely while the streamers were off having dinner. By the time the travelers returned, the feature was back.

The Truck: OBS and the Missing Internet

The Crash Bug

The truck setup had its own technical gremlins, beyond an overheating GoPro, a suction cup that didn't always hold, and frequent internet drops. OBS Studio, for all its strengths, has a long-standing bug: if it completely loses internet connectivity while streaming over the SRT protocol, it crashes. Not a graceful disconnect. A full crash. In a moving vehicle relying on cellular towers and satellite dishes, complete connectivity loss is a "when," not an "if," especially when driving through the redwood forest, valleys, or tunnels.

Every time the truck passed through a dead zone, there was a chance OBS would die, requiring Roamin Nomad to restart the software manually. It happened often enough that he actually got pretty good at it. While it was a joke in the chat, it was less funny for the people in the truck.

The Colorado Donut Fix

Nordic Noob and Roamin Nomad stopped for donuts at Sweet Coloradough in Glenwood Springs, Colorado. Unbeknownst to those watching the stream, we were once again remoted into the truck's onboard computer, this time to solve the OBS crash problem before the tunnels of Glenwood Canyon.

The solution was elegant in its simplicity: we set up a re-stream proxy using mediamtx running in a Docker container on the truck computer itself. Instead of OBS streaming directly to the remote server over the volatile internet, OBS would stream to the local Docker container. The container was always up, always reachable, and never crashed (except when Roamin accidentally restarted it once). The container process then handled connectivity to the outside world, reconnecting as needed. When OBS lost internet, it did not know. It was still talking to the container on the same machine. The container handled the chaos.
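The idea of putting a local middleman between the producer and a flaky network can be shown with a toy Python sketch. To be clear, this is not mediamtx's code or configuration, and every name in it (`UpstreamDown`, `relay`, the fake sender) is hypothetical. It only shows the shape of the fix: the producer hands chunks to something that is always reachable, and that something absorbs the failures and retries.

```python
import time
from typing import Callable, Iterable, Tuple


class UpstreamDown(Exception):
    """Raised by a (hypothetical) upstream sender when the link is down."""


def relay(
    chunks: Iterable[bytes],
    send: Callable[[bytes], None],
    max_attempts: int = 5,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, int]:
    """Forward chunks to a flaky upstream; return (delivered, dropped).

    The producer of `chunks` never sees an UpstreamDown error. The relay
    retries with exponential backoff and drops a chunk only after
    max_attempts failures, since stale live video is not worth keeping.
    """
    delivered = dropped = 0
    for chunk in chunks:
        for attempt in range(max_attempts):
            try:
                send(chunk)
                delivered += 1
                break
            except UpstreamDown:
                sleep(base_delay * (2 ** attempt))
        else:
            dropped += 1
    return delivered, dropped


# A fake upstream that fails on its 2nd and 3rd calls, like a short tunnel.
calls = {"n": 0}
received = []


def flaky_send(chunk: bytes) -> None:
    calls["n"] += 1
    if calls["n"] in (2, 3):
        raise UpstreamDown()
    received.append(chunk)


result = relay([b"a", b"b", b"c"], flaky_send, sleep=lambda seconds: None)
print(result, received)
```

In this toy run all three chunks arrive despite the two failed sends, and the code producing the chunks never has to handle an error. That separation is what kept OBS alive in the truck.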

From that point on, OBS crashes stopped. Nordic Noob and Roamin Nomad got to enjoy their donuts in peace. And we added another tool to our ever-growing bag of tricks.

(For more technical details on the Colorado Donut Fix, see here.)

The Finish Line

By the time Nordic Noob and Roamin Nomad reached their destination, the stream had been running for fourteen days. It had survived three servers, two data center fiascos, multiple severe storms, countless network drop-outs, and one emergency configuration session conducted from a storm shelter.

The technology was held together by duct tape -- literally a roll of duct tape on the power button to prevent a cat from powering it down. Nordic Noob developed a new appreciation that Mage's occasional (ok, near-continuous) "grouchiness" toward technology is actually the years of experience and wisdom needed to pull something like this off. And it worked. The stream never died. The community showed up every day, watching the streamers get chest waxes and checkerboard haircuts, take a cold plunge, and dance, dance, dance.

On a more serious note, the stream featured multiple leading Morquio researchers, visited a woman who has lived with Morquio for over 40 years, and let Freya get some airtime playing Minecraft. Chat shared the journey as if they were in the passenger seat. We raised over $22,000 for Morquio research.

Path to Hope was never about the servers. It was about the people on either end of the connection: the streamers willing to drive across the country for a cause, the viewers who tuned in to be part of something bigger, Freya, who bravely battles Morquio every day, and the friends who refused to let a little thing like a tickle foot hosting provider stand in the way.

The same exact technical setup? Never again.

The same cause? In a heartbeat.